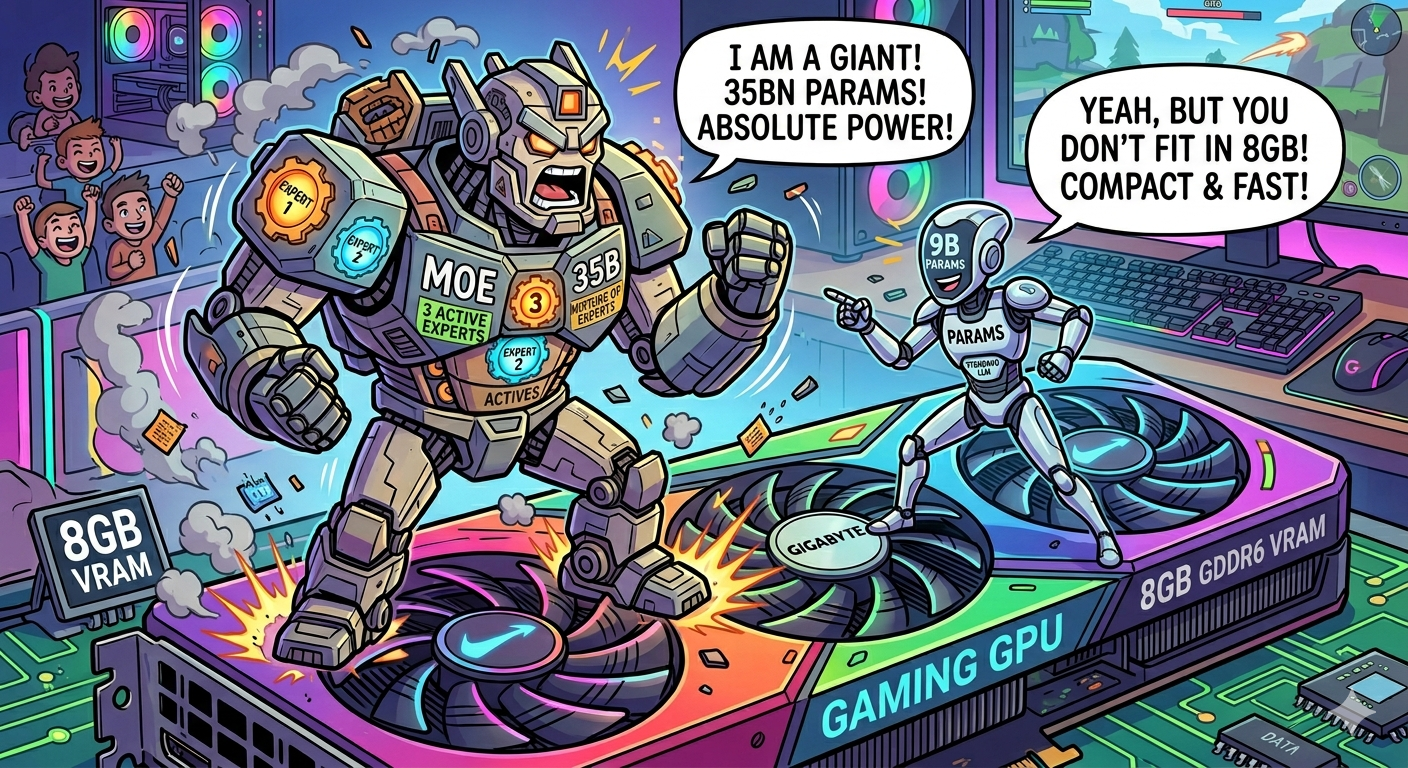

MoE vs Dense on a Budget GPU: How Qwen3.6-35B Lost to a 9B Model from the Same Family

I benchmarked Qwen3.6-35B-A3B with CPU offloading against Qwopus-9B on 26 coding tasks. The MoE model activates only 3 billion parameters per token, fewer than the dense model's 9 billion. It still got crushed. Here is why.